Leveraging Quantum Transfer Learning for Enhanced Large Language Models

Introduction

What if training powerful AI models didn’t have to be slow, expensive, or data-hungry?

Optimizing large language models (LLMs) runs into significant hurdles: high computational costs, long training times, and massive data requirements. Quantum transfer learning (QTL) is a promising approach that uses quantum computing to improve efficiency, shorten training, and perform well with smaller datasets. If it delivers, it could reshape natural language processing and make AI capabilities more accessible. But how exactly does QTL tackle these challenges? This blog explains its role in optimizing LLMs and the possibilities it opens up.

Before diving into its impact, it helps to cover the foundational concepts of Quantum AI innovations and their relevance to LLMs.

Understanding the basic terminology

Quantum transfer learning (QTL)

Quantum transfer learning merges classical machine learning with the principles of quantum mechanics, such as superposition and entanglement, to solve problems faster and more efficiently. A classical computer works on one definite state at a time, whereas a quantum system can hold a superposition of many states at once. QTL aims to exploit this property to reduce training time.
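To make superposition and entanglement concrete, here is a minimal sketch that simulates two qubits as a list of four amplitudes in plain Python. The function names are illustrative and not taken from any quantum library. A Hadamard gate puts the first qubit into superposition, and a CNOT gate then entangles it with the second.

```python
import math

# Amplitudes of a 2-qubit state, ordered |00>, |01>, |10>, |11>.
state = [1 + 0j, 0j, 0j, 0j]

def hadamard_on_first(s):
    """Apply a Hadamard gate to the first (leftmost) qubit."""
    h = 1 / math.sqrt(2)
    return [h * (s[0] + s[2]), h * (s[1] + s[3]),
            h * (s[0] - s[2]), h * (s[1] - s[3])]

def cnot_first_controls_second(s):
    """Flip the second qubit when the first is 1 (swaps |10> and |11>)."""
    return [s[0], s[1], s[3], s[2]]

# Superposition on qubit 1, then entanglement with qubit 2 (a Bell state).
bell = cnot_first_controls_second(hadamard_on_first(state))
probabilities = [abs(a) ** 2 for a in bell]
print(probabilities)  # only |00> and |11> have non-zero probability
```

Measuring this state gives 00 or 11 with equal probability and never 01 or 10, so the outcome of one qubit fixes the outcome of the other.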

But why does this matter for AI? In deep learning, classical models require enormous computational power to train on large datasets. QTL uses variational quantum circuits (VQCs) in place of conventional long short-term memory (LSTM) cells, which can allow neural networks to optimize and generalize with far fewer parameters. This shift has significant implications for large language model (LLM) optimization.

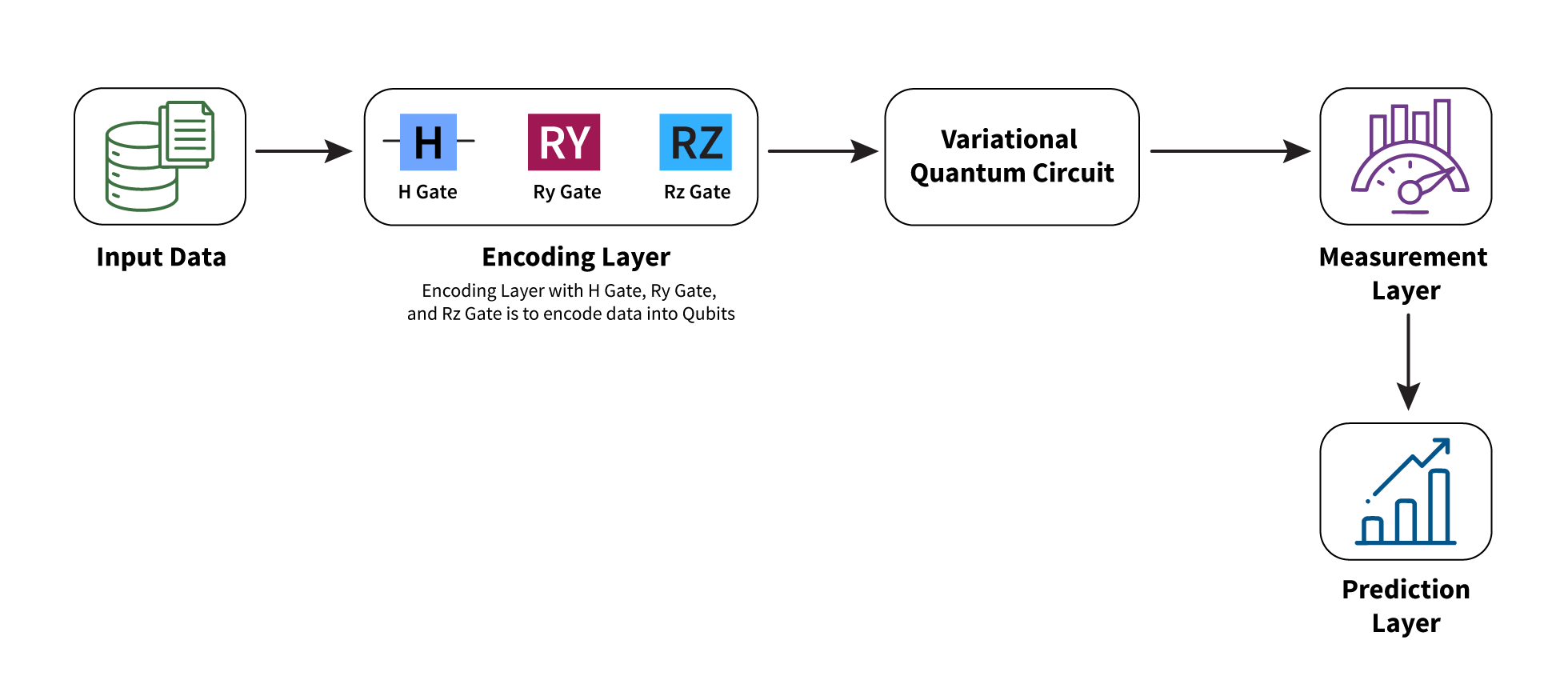

Here is a basic workflow of quantum transfer learning

Fig 1: Workflow of Quantum Transfer Learning

Large language models (LLMs) and the role of QTL

Large Language Models (LLMs) have transformed natural language processing, enabling applications like question-answering, summarization, and content generation. However, their growing complexity demands vast computational resources, making large language model optimization a key focus area. Quantum AI innovations, particularly QTL, offer a promising path forward. By integrating quantum-enhanced learning techniques, LLMs can process unstructured data more effectively, recognize patterns with greater accuracy, and achieve higher performance with smaller datasets. This synergy between quantum computing and deep learning has the potential to mitigate many of the inefficiencies that plague large-scale AI models today.

Challenges in current LLM models

While LLMs are transforming various aspects of our daily lives, their development is not without challenges. These models have broad application areas, including question answering, chatbots, summary generation, content creation, code generation, and more. Despite their advancements, LLMs face several challenges that hinder scalability and efficiency:

- Memory limitations

LLMs require extensive memory to process vast datasets, posing difficulties when deployed on resource-limited devices.

- Large model sizes

Storing LLMs demands significant storage, often in the hundreds of gigabytes, making deployment complex. Even advanced methods like model parallelism involve trade-offs.

- Scalability and bandwidth constraints

Scalability is crucial for LLMs and is often achieved through model parallelism (MP), which distributes the model across multiple machines. Distributed inference further supports large-scale tasks. However, MP’s efficiency can decrease when spread across nodes due to increased communication overhead.
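A rough back-of-the-envelope calculation shows why memory and model size become a problem. The sketch below assumes a hypothetical 70-billion-parameter model stored in 16-bit precision. It counts only the weights and ignores activations, optimizer state, and communication buffers, so real requirements are higher.

```python
def weight_memory_gb(num_parameters: float, bytes_per_parameter: int) -> float:
    """Memory needed just to hold the weights, in gigabytes (1 GB = 1e9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9

def per_device_gb(total_gb: float, num_devices: int) -> float:
    """Ideal even split under model parallelism, ignoring any overhead."""
    return total_gb / num_devices

# Hypothetical model: 70 billion parameters at 2 bytes each (16-bit precision).
total = weight_memory_gb(70e9, 2)
shard = per_device_gb(total, 8)
print(f"weights: {total:.0f} GB, per device across 8 devices: {shard:.1f} GB")
```

Under these assumptions the weights alone take about 140 GB, or 17.5 GB per device when split evenly across eight devices. The split eases the memory pressure on each device, but every extra device adds communication between nodes.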

The role of quantum transfer learning in LLMs

We propose using variational quantum circuits (VQCs) to replace traditional long short-term memory (LSTM) cells. VQCs, a specialized type of quantum circuit, offer a novel approach to processing information. The core concept behind this innovation is the transfer of knowledge learned from one quantum task to another, even if the tasks are different. This transferability can significantly enhance performance and accelerate learning on the target quantum task.

By leveraging the unique properties of quantum mechanics, VQCs can handle complex computations, driving progress in large language model optimization. This capability opens new possibilities for advancements in various fields, such as machine learning, optimization, and cryptography. The integration of VQCs into existing frameworks helps improve computational speed and provide a more robust and scalable solution for future quantum applications.

Moreover, the adaptability of VQCs allows for continuous learning and improvement, making them an ideal choice for dynamic and evolving tasks.
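The transfer idea can be illustrated with a toy example: keep what was learned earlier frozen and train only a small variational part on the new task. In the sketch below, `frozen_feature` is a hypothetical stand-in for previously learned knowledge, and the trainable part is a single-qubit circuit whose output has a known closed form. The gradient comes from the parameter-shift rule, a standard way to differentiate rotation gates. None of this is the article's exact method, and the data is made up.

```python
import math

def frozen_feature(x):
    """Hypothetical stand-in for a pre-trained, frozen feature extractor."""
    return math.tanh(x) * math.pi / 2      # feature expressed as a rotation angle

def expectation(angle, theta):
    """<Z> after RY(angle) then RY(theta) on |0>, which equals cos(angle + theta)."""
    return math.cos(angle + theta)

def loss(theta, data):
    """Mean squared error between circuit output and label."""
    return sum((expectation(frozen_feature(x), theta) - y) ** 2
               for x, y in data) / len(data)

def parameter_shift_grad(theta, data):
    """Gradient of the loss w.r.t. theta using the parameter-shift rule."""
    grad = 0.0
    for x, y in data:
        a = frozen_feature(x)
        d_exp = (expectation(a, theta + math.pi / 2)
                 - expectation(a, theta - math.pi / 2)) / 2
        grad += 2 * (expectation(a, theta) - y) * d_exp
    return grad / len(data)

# Toy target task: negative inputs labelled +1, positive inputs labelled -1.
data = [(-1.0, 1.0), (1.0, -1.0), (-2.0, 1.0), (2.0, -1.0)]

theta = 0.0                                # the only trainable parameter
initial_loss = loss(theta, data)
for _ in range(100):
    theta -= 0.2 * parameter_shift_grad(theta, data)
final_loss = loss(theta, data)
print(f"loss before: {initial_loss:.3f}, after: {final_loss:.3f}")
```

Only `theta` is updated during training. The frozen part is reused as is, which is the source of the parameter savings that transfer learning aims for.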

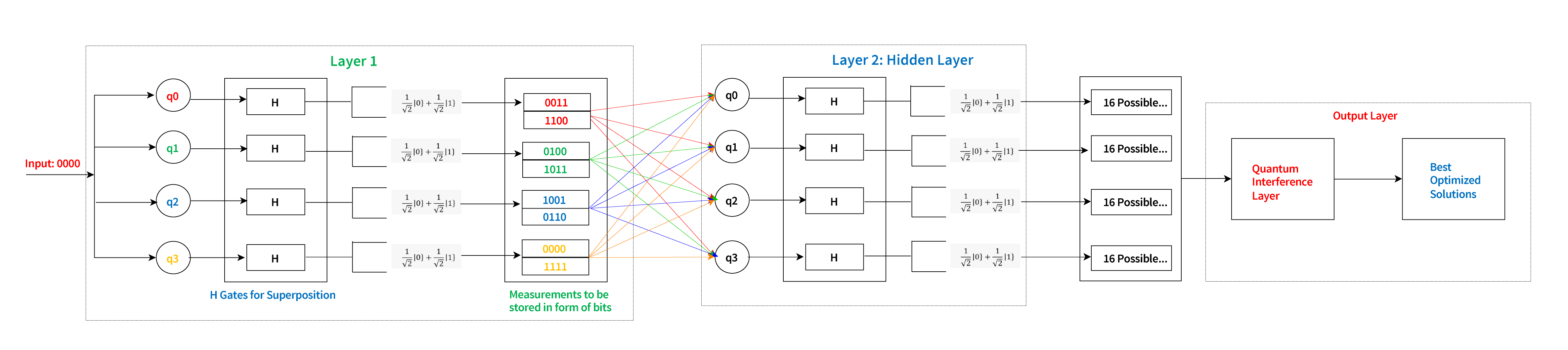

Here is the architecture of 4 Qubit Variational Quantum Circuit that we can use in training of our LLMs:

Figure 2: Architecture of 4 Qubit Variational Quantum Circuit
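For readers who want to experiment, here is a minimal sketch of a 4-qubit variational circuit simulated in plain Python. The gate layout is an assumption chosen for illustration and may differ from the circuit in the figure: RY rotations encode the inputs, a ring of CNOT gates entangles the qubits, and trainable RY rotations follow. All function names are illustrative.

```python
import math

N_QUBITS = 4

def apply_ry(state, qubit, theta):
    """Apply an RY(theta) rotation to one qubit of the state vector."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    bit = 1 << (N_QUBITS - 1 - qubit)      # qubit 0 is the leftmost bit
    new = list(state)
    for i in range(len(state)):
        if not i & bit:                    # visit each (|..0..>, |..1..>) pair once
            a0, a1 = state[i], state[i | bit]
            new[i] = c * a0 - s * a1
            new[i | bit] = s * a0 + c * a1
    return new

def apply_cnot(state, control, target):
    """Flip the target qubit on basis states where the control qubit is 1."""
    cbit = 1 << (N_QUBITS - 1 - control)
    tbit = 1 << (N_QUBITS - 1 - target)
    new = list(state)
    for i in range(len(state)):
        if i & cbit:
            new[i] = state[i ^ tbit]
    return new

def expectation_z(state, qubit):
    """Expectation value of Pauli-Z on one qubit, a number in [-1, 1]."""
    bit = 1 << (N_QUBITS - 1 - qubit)
    return sum((-1 if i & bit else 1) * abs(a) ** 2 for i, a in enumerate(state))

def vqc(inputs, weights):
    """inputs: 4 encoding angles; weights: list of layers, each with 4 angles."""
    state = [0.0] * (2 ** N_QUBITS)
    state[0] = 1.0                         # start in |0000>
    for q in range(N_QUBITS):              # angle encoding of the inputs
        state = apply_ry(state, q, inputs[q])
    for layer in weights:
        for q in range(N_QUBITS):          # entangle neighbours in a ring
            state = apply_cnot(state, q, (q + 1) % N_QUBITS)
        for q in range(N_QUBITS):          # trainable rotations
            state = apply_ry(state, q, layer[q])
    return [expectation_z(state, q) for q in range(N_QUBITS)]

inputs = [0.1, 0.2, 0.3, 0.4]
weights = [[0.5, -0.3, 0.8, 0.1], [0.2, 0.7, -0.4, 0.9]]
outputs = vqc(inputs, weights)
print(outputs)                             # four values, each between -1 and 1
```

This two-layer example has only eight trainable angles. A classical simulation like this doubles in cost with every added qubit, which is why larger circuits need real quantum hardware or specialized simulators.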

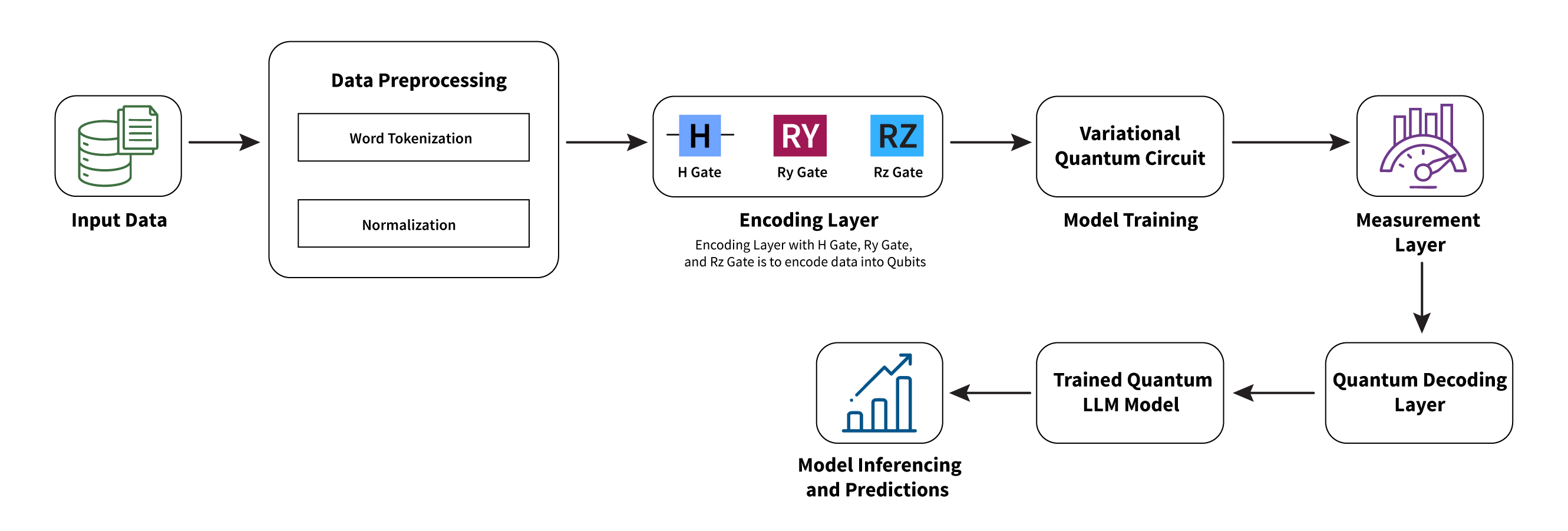

Below is the overall workflow and design explaining how we can use this above-mentioned VQC circuit in LLM training:

The architecture for training an LLM using VQCs begins with the input data, which is the raw data intended for training. The preprocessing module first normalizes and tokenizes this data. The preprocessed data is then fed into the quantum encoding layer, which converts it into a qubit representation suitable for the VQC. The VQCs process this encoded data and generate outputs, which are passed to the measurement layer to identify the best optimized solution. The quantum decoding layer then converts the optimized solutions back into a classical format. Finally, the processed data results in a trained large language model. Together, these components show how quantum computing can be applied to the efficient training of LLMs.
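The data flow described above can be sketched end to end in a few lines of Python. Everything here is an illustrative assumption: the tiny vocabulary, the whitespace tokenizer, the angle encoding, and the softmax decoding are hypothetical choices. The circuit itself is replaced by a simple stand-in without entanglement (one RY rotation per qubit, whose measured value is a cosine), so the focus stays on how the stages connect.

```python
import math

def preprocess(text, vocab):
    """Tokenize on whitespace and map each token to a vocabulary id (0 if unknown)."""
    return [vocab.get(tok, 0) for tok in text.lower().split()]

def encode_angles(token_ids, vocab_size, n_qubits=4):
    """Normalize ids to rotation angles in [0, pi]; pad or truncate to n_qubits."""
    ids = (token_ids + [0] * n_qubits)[:n_qubits]
    return [math.pi * i / (vocab_size - 1) for i in ids]

def circuit_stand_in(angles, weights):
    """Stand-in for the VQC plus measurement: <Z> of RY(angle) then RY(weight)."""
    return [math.cos(a + w) for a, w in zip(angles, weights)]

def decode(expectations):
    """Map expectation values in [-1, 1] to a probability distribution (softmax)."""
    exps = [math.exp(e) for e in expectations]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical five-word vocabulary for illustration only.
vocab = {"<unk>": 0, "quantum": 1, "transfer": 2, "learning": 3, "model": 4}

token_ids = preprocess("Quantum transfer learning", vocab)   # preprocessing
angles = encode_angles(token_ids, vocab_size=len(vocab))     # quantum encoding
outputs = circuit_stand_in(angles, weights=[0.1, 0.2, 0.3, 0.4])  # circuit + measurement
probabilities = decode(outputs)                              # decoding
print(token_ids, probabilities)
```

In a fuller version, `circuit_stand_in` would be swapped for an entangling circuit, and the weights would be updated during training rather than fixed.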

Use case

One high-level use case of this architecture is in the development of advanced chatbots. By training a large language model using VQCs, the chatbot can achieve superior natural language understanding and generation capabilities. This results in more accurate and contextually relevant responses, enhancing user interactions and providing a more seamless conversational experience.

Advantages of using VQCs in LLM training

- Faster training: Quantum AI innovations can accelerate LLM training through parallel computations and efficient optimization, using algorithms such as the Quantum Approximate Optimization Algorithm (QAOA) and the Variational Quantum Eigensolver (VQE).

- Improved optimization: Quantum-enhanced optimization techniques, such as quantum annealing, enhance model performance by exploring solutions more effectively.

- Efficient data handling: Quantum systems manage large datasets with greater efficiency, improving scalability.

- New architectures: Quantum computing inspires new LLM designs, enhancing their capabilities.

- Solving complex problems: Quantum AI innovations tackle challenges beyond classical capabilities, refining language understanding and reasoning.

Conclusion

Quantum computing has the potential to significantly improve large language models by addressing challenges such as high computational costs, lengthy training times, and the need for extensive datasets. Quantum transfer learning (QTL) leverages quantum computing to improve the efficiency and effectiveness of LLMs, reducing computational burden and accelerating training processes. This integration allows LLMs to achieve superior performance even with smaller datasets, transforming natural language processing and advancing the field of AI.

